I’ve spent the better part of a decade walking into plants where the shop floor looks like the 1990s and the boardroom looks like 2024. The fundamental problem is always the same: you have a massive disconnect between your PLC-driven OT (Operational Technology) layer and your ERP-driven IT layer. When a plant manager tells me they need "Industry 4.0," I stop them right there. I don't want to hear about buzzwords; I want to hear about your ingestion rate and your current downtime percentages.

If you're evaluating partners like STX Next, NTT DATA, or Addepto to bridge this gap, don't let them pitch you on "digital transformation" for six months. I want to know: How fast can you start, and what do I get in week 2? If they can’t show me a concrete architectural sprint in 14 days, you’re hiring a PowerPoint agency, not a data engineering partner.

The Project Mobilization Reality Check

Most consultants treat the first month like a polite conversation. In manufacturing, that’s a death sentence. When we engage, we start with project mobilization immediately. By the end of the first week, I expect to see infrastructure-as-code (IaC) templates hitting your Azure or AWS environment.

Here is what the actual work looks like when you move past the marketing fluff.

Week 1: Discovery Workshops & Environmental Readiness

During the first five days, we aren't just mapping business processes; we are mapping network topology. We are looking at how to get data out of your proprietary MES and into a scalable lakehouse. We look at:

- Connectivity: How do we bypass the "air gap" without compromising security?
- Platform Choice: Are we betting on Databricks or Snowflake for the compute layer? Or are we leaning into Microsoft Fabric for its tight integration with existing enterprise stacks?
- Tooling Selection: How are we orchestrating? If you aren't using Airflow or Dagster for orchestration and dbt for transformation, you’re making your life harder than it needs to be.
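Tool names aside, the core job of Airflow or Dagster is running tasks in dependency order and deciding what happens when one fails. The sketch below uses only Python's standard-library `graphlib` to illustrate that idea; it is not the API of either tool, and the task names are hypothetical. Real orchestrators add scheduling, retries, and backfills, and would keep running branches that don't depend on the failed task, whereas this sketch simply stops.

```python
from graphlib import TopologicalSorter

# Hypothetical nightly pipeline: each task maps to the set of tasks it depends on.
PIPELINE = {
    "extract_mes": set(),
    "extract_erp": set(),
    "land_raw_zone": {"extract_mes", "extract_erp"},
    "dbt_build": {"land_raw_zone"},
    "refresh_oee_dashboard": {"dbt_build"},
}


def run_pipeline(graph, tasks):
    """Run tasks in dependency order; on the first failure, stop and report the rest as not run.

    tasks: dict of task name -> zero-argument callable returning True on success.
    """
    order = list(TopologicalSorter(graph).static_order())
    completed = []
    for i, name in enumerate(order):
        if not tasks[name]():
            return {"completed": completed, "failed": name, "not_run": order[i + 1:]}
        completed.append(name)
    return {"completed": completed, "failed": None, "not_run": []}


if __name__ == "__main__":
    tasks = {name: (lambda: True) for name in PIPELINE}
    tasks["dbt_build"] = lambda: False  # simulate a failing transformation step
    print(run_pipeline(PIPELINE, tasks))
```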

Week 2: The "Proof Point" Sprint

By the end of Week 2, I need to see data movement. This is the "Week 2 Deliverable." If a partner like STX Next tells you they are still "finalizing documentation," fire them. You should have a functional pipeline that demonstrates the following:

- A successful ingestion of a sample set of telemetry data from a gateway.
- A clear distinction between your batch ingestion (for historical ERP reporting) and your streaming pipelines (for real-time OEE monitoring).
- An observability dashboard that shows latency and record counts.

Batch vs. Streaming: The Architect’s Dilemma

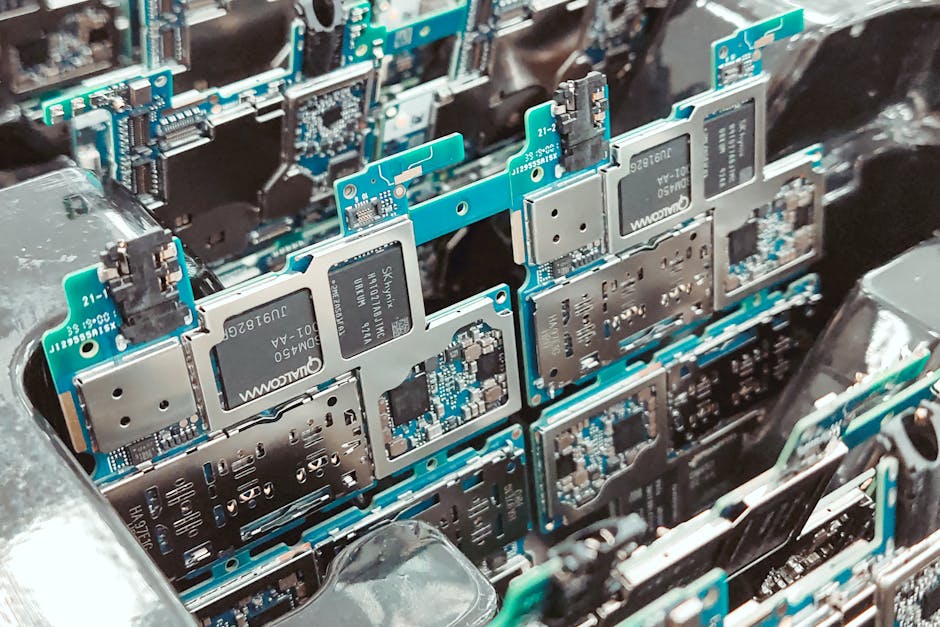

One of the biggest red flags I encounter during a data platform kickoff is when a vendor promises "real-time" without defining the plumbing. You cannot treat IoT sensor data the same way you treat quarterly ERP financial reports. Here is how we break down the architecture:

| Data Type | Source | Pipeline Strategy | Tech Stack Recommendation |
|---|---|---|---|
| MES/ERP Data | SQL/APIs | Batch (Incremental) | dbt + Azure Data Factory |
| PLC/Sensor Data | OPC-UA/MQTT | Streaming | Kafka + Databricks/AWS Kinesis |

When vendors talk about Industry 4.0, they often ignore the complexity of the "middle." You need to land your OT data in a raw zone, cleanse it, and then join it with your ERP data (like work orders or shift schedules) to provide meaningful context. If you don't have this context, your data is just noise.
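Joining OT readings to ERP context is usually an interval (or "as-of") join: each sensor reading is matched to the work order that was running on that machine at that moment. A minimal in-memory sketch, assuming hypothetical field names and that work orders on a single machine never overlap; in a lakehouse you would express the same logic as a SQL range join in dbt or Databricks rather than in Python:

```python
from bisect import bisect_right
from datetime import datetime

# Hypothetical ERP context: work orders per machine, each active over [start, end).
WORK_ORDERS = [
    {"machine_id": "press-01", "work_order": "WO-1001",
     "start": datetime(2024, 5, 1, 6), "end": datetime(2024, 5, 1, 10)},
    {"machine_id": "press-01", "work_order": "WO-1002",
     "start": datetime(2024, 5, 1, 10), "end": datetime(2024, 5, 1, 14)},
]


def build_index(work_orders):
    """Group work orders by machine and sort them by start time so lookups can binary-search."""
    grouped = {}
    for wo in work_orders:
        grouped.setdefault(wo["machine_id"], []).append(wo)
    index = {}
    for machine, rows in grouped.items():
        rows.sort(key=lambda wo: wo["start"])
        index[machine] = ([wo["start"] for wo in rows], rows)
    return index


def enrich(reading, index):
    """Return the reading plus the work order active at its timestamp (None if nothing was running)."""
    starts, rows = index.get(reading["machine_id"], ([], []))
    # Last work order that started at or before the reading; assumes no overlaps per machine.
    i = bisect_right(starts, reading["ts"]) - 1
    active = rows[i] if i >= 0 and reading["ts"] < rows[i]["end"] else None
    return {**reading, "work_order": active["work_order"] if active else None}


if __name__ == "__main__":
    index = build_index(WORK_ORDERS)
    reading = {"machine_id": "press-01", "ts": datetime(2024, 5, 1, 9, 30), "temp_c": 71.2}
    print(enrich(reading, index))  # the original reading with work_order set to "WO-1001"
```

Readings that fall outside every work order come back with `work_order` set to `None` rather than being dropped, which keeps unexplained machine activity visible instead of hiding it.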

Evaluating Your Partners: STX Next, NTT DATA, and Addepto

When I review vendors, I look at how they handle the "ugly" parts of integration. Companies like NTT DATA bring massive enterprise scale, which is great for legacy ERP migrations. Addepto tends to lean into the AI/ML side of things, which is fantastic if your data quality is already high. STX Next brings a software-first approach that I’ve found useful when building custom middleware to talk to legacy PLCs that don't support standard protocols.

However, regardless of the brand, look for these three things in their week-two deliverable:

- Observability: Where are the logs? How do I know if a pipeline fails at 3:00 AM?
- Schema Registry: Are they enforcing structure on your streaming data?
- Infrastructure-as-Code: Can they destroy and recreate the environment using Terraform?
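The first two checks can be made concrete before any vendor tooling is chosen. A minimal sketch, assuming newline-delimited JSON telemetry from a gateway and a hypothetical schema dict standing in for a real registry (products such as Confluent Schema Registry store Avro, Protobuf, or JSON Schema definitions instead): records that break the schema go to a dead-letter list rather than vanishing, and the run produces the record counts and latency figures a Week 2 observability dashboard should show, plus a freshness check for the 3:00 AM question. Field names, function names, and the 15-minute threshold are illustrative assumptions.

```python
import json
from datetime import datetime, timedelta, timezone
from statistics import median

# Hypothetical stand-in for a registered schema: required field -> expected Python type.
# Deliberately strict: an int where a float is registered is rejected.
TELEMETRY_SCHEMA_V1 = {"machine_id": str, "ts": str, "temp_c": float}


def validate(record, schema):
    """Return a list of schema violations; an empty list means the record conforms."""
    if not isinstance(record, dict):
        return ["record is not a JSON object"]
    problems = [f"missing field: {f}" for f in schema if f not in record]
    problems += [
        f"{f}: expected {t.__name__}, got {type(record[f]).__name__}"
        for f, t in schema.items()
        if f in record and not isinstance(record[f], t)
    ]
    return problems


def ingest(lines, schema, ingested_at):
    """Parse JSON lines, divert bad records to a dead-letter list, and compute dashboard metrics.

    Assumes the schema includes a "ts" field holding an ISO-8601 timestamp with a UTC offset.
    """
    accepted, dead_letter, latencies = [], [], []
    for line in lines.splitlines():
        if not line.strip():
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            dead_letter.append({"raw": line, "problems": ["invalid JSON"]})
            continue
        problems = validate(record, schema)
        if not problems:
            try:
                event_time = datetime.fromisoformat(record["ts"])
                if event_time.tzinfo is None:
                    problems = ["ts: missing UTC offset"]
            except ValueError:
                problems = ["ts: not an ISO-8601 timestamp"]
        if problems:
            dead_letter.append({"raw": line, "problems": problems})
            continue
        accepted.append(record)
        latencies.append((ingested_at - event_time).total_seconds())
    metrics = {
        "record_count": len(accepted),
        "dead_letter_count": len(dead_letter),
        "median_latency_s": median(latencies) if latencies else None,
        "max_latency_s": max(latencies) if latencies else None,
    }
    return accepted, dead_letter, metrics


def freshness_alert(last_record_at, now, max_gap=timedelta(minutes=15)):
    """True when no record has landed for longer than max_gap: the signal to page someone at 3:00 AM."""
    return now - last_record_at > max_gap


SAMPLE = (
    '{"machine_id": "press-01", "ts": "2024-05-01T08:00:00+00:00", "temp_c": 71.2}\n'
    '{"machine_id": "press-02", "ts": "2024-05-01T08:00:01+00:00", "temp_c": "hot"}\n'
    "not-json\n"
    '{"machine_id": "press-01", "ts": "2024-05-01T08:00:02+00:00", "temp_c": 71.9}\n'
)

if __name__ == "__main__":
    now = datetime(2024, 5, 1, 8, 0, 5, tzinfo=timezone.utc)
    _, dead, metrics = ingest(SAMPLE, TELEMETRY_SCHEMA_V1, now)
    print(metrics)  # {'record_count': 2, 'dead_letter_count': 2, 'median_latency_s': 4.0, 'max_latency_s': 5.0}
```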

Why "Real-Time" without Architecture is a Lie

I get annoyed when I hear "real-time" used as a generic marketing term. Real-time manufacturing data is expensive and difficult to maintain. It requires a robust streaming backbone, typically Apache Kafka or an equivalent cloud-native service. If your vendor tells you they are doing real-time but doesn't mention Kafka, partition keys, or stream processing latency (measured in milliseconds, not seconds), they are building you a slow batch system and calling it real-time.
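Partition keys matter because Kafka only guarantees ordering within a partition, so keying each event by machine keeps every machine's readings in sequence. A minimal sketch of key-based partition assignment, assuming a hypothetical six-partition topic; it uses CRC32 purely for illustration, while Kafka's Java client hashes keys with murmur2, so these partition numbers will not match a real cluster:

```python
import zlib
from collections import defaultdict

NUM_PARTITIONS = 6  # hypothetical partition count for the telemetry topic


def partition_for(key, num_partitions=NUM_PARTITIONS):
    """Map a key to a partition with a stable hash, so a machine always lands on the same partition."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def assign(events):
    """Group events by partition, preserving arrival order within each partition."""
    partitions = defaultdict(list)
    for event in events:
        partitions[partition_for(event["machine_id"])].append(event)
    return partitions
```

The stable hash is the point: Python's built-in `hash()` on strings is randomized per process, so it could send the same machine to a different partition after a restart. Changing the partition count also remaps keys.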

Your platform needs to handle the heavy lifting of:

- Absorbing late-arriving data packets from the shop floor.
- Dealing with intermittent network connectivity in remote plant locations.
- Maintaining data lineage so you can trace a quality defect back to the specific sensor reading on a specific machine.
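Late-arriving packets are usually handled with event-time windows and a watermark: the pipeline tracks the latest event time it has seen, keeps each window open until the watermark passes its end, and diverts anything later to a side output instead of silently dropping it. Engines such as Spark Structured Streaming (`withWatermark`) and Apache Flink provide this natively; the sketch below is a plain-Python illustration of the concept, not their implementation, with a hypothetical 60-second window, a 30-second lateness budget, and event times as integer epoch seconds:

```python
from collections import defaultdict

WINDOW_S = 60            # hypothetical tumbling-window size, in seconds
ALLOWED_LATENESS_S = 30  # hypothetical grace period for packets delayed by flaky plant networks


class EventTimeCounter:
    """Count readings per (machine, window) by event time, accepting data up to ALLOWED_LATENESS_S late."""

    def __init__(self):
        self.open = defaultdict(int)   # (machine_id, window_start) -> running count
        self.closed = {}               # finalized windows, safe to publish downstream
        self.too_late = []             # readings whose window was already finalized
        self.max_event_time = float("-inf")

    def watermark(self):
        # Event time before which we assume no more data will arrive.
        return self.max_event_time - ALLOWED_LATENESS_S

    def add(self, machine_id, event_time):
        window_start = event_time - event_time % WINDOW_S
        if window_start + WINDOW_S <= self.watermark():
            # Window already finalized: keep the reading for reconciliation instead of dropping it.
            self.too_late.append((machine_id, event_time))
            return
        self.open[(machine_id, window_start)] += 1
        self.max_event_time = max(self.max_event_time, event_time)
        # Finalize every window whose end has fallen behind the watermark.
        wm = self.watermark()
        for key in [k for k in self.open if k[1] + WINDOW_S <= wm]:
            self.closed[key] = self.open.pop(key)


if __name__ == "__main__":
    c = EventTimeCounter()
    for t in [10, 65, 50, 100, 55]:  # 50 arrives out of order but in time; 55 arrives after its window closed
        c.add("press-01", t)
    print(c.closed, c.too_late)  # {('press-01', 0): 2} [('press-01', 55)]
```

The lateness budget is the knob that trades completeness for latency: a plant whose gateways buffer and replay data during network outages needs a longer budget, at the cost of publishing each window later.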

Final Thoughts: Demand the Proof

Manufacturing data engineering isn't about pretty dashboards; it’s about downtime reduction, throughput optimization, and energy efficiency. It is a technical discipline, not a creative one. When you head into your discovery workshops, bring a list of your most painful manual data extraction processes and ask the vendor to map those specifically.

Ask these questions in your kickoff:

- "Can you show me how you handle a backfill if the pipeline crashes?"
- "What is the total cost of ownership (TCO) per gigabyte of ingested sensor data?"
- "How many records per second can this architecture handle before we hit a performance bottleneck?"
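A good answer to the backfill question usually rests on idempotent, partitioned writes: each partition is rebuilt by overwriting it, so rerunning a range after a crash cannot double-count rows. A minimal sketch, with a dict standing in for a date-partitioned table and a hypothetical `source_reader` callable:

```python
from datetime import date, timedelta


def daily_partitions(start, end):
    """Every daily partition key from start to end, inclusive."""
    return [start + timedelta(days=i) for i in range((end - start).days + 1)]


def backfill(target, source_reader, start, end):
    """Rebuild each daily partition by overwriting it, so a rerun after a crash never duplicates rows.

    target: dict standing in for a date-partitioned table (hypothetical).
    source_reader: hypothetical callable returning the source rows for one day.
    """
    rebuilt = []
    for day in daily_partitions(start, end):
        target[day] = list(source_reader(day))  # overwrite, never append: this is what makes it idempotent
        rebuilt.append(day)
    return rebuilt
```

Because every day is overwritten rather than appended to, the recovery procedure after a crash is simply to rerun the affected date range.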

If they can't answer those questions, you're not building a platform; you're building technical debt. Get them to sign off on a 2-week delivery plan, hold them to it, and if they can't show you the pipeline flowing by day 10, find someone who can.